The Event Horizon of Change: Why the Future Is Arriving Faster Than You Think

Where I explore how accelerating AI transformations are compressing time itself, and what that means for every leader, builder, and institution caught in the pull

I’m a fan of Christopher Nolan’s Interstellar. In it, there’s a scene where Cooper and his crew land on Miller’s planet, where the gravitational pull of a nearby black hole distorts time so severely that one hour on the surface equals seven years back on Earth. They spend what feels like minutes collecting data. When they return to the orbiting ship, twenty-three years have passed. Their colleague has aged. Their children have grown up. The world they left behind no longer exists.

We are living through our own version of this time dilation, except nobody gets to stay on the ship.

The gravitational force warping our timeline isn’t a black hole. It’s artificial intelligence. And the distortion isn’t theoretical. It’s measurable in months, not decades. The intervals between transformative capability shifts are compressing so rapidly that our institutions, our industries, and our individual capacity for adaptation are being stretched past their design limits. I call this phenomenon cascading transformations, and I believe it represents the most consequential structural dynamic in technology today, not because of what AI can do, but because of what the rate of AI advancement does to everything around it, including us.

If this sounds interesting to you, please read on…

This Substack, LawDroid Manifesto, is here to keep you in the loop about the intersection of AI and the law. Please share this article with your friends and colleagues, and remember to tell me what you think in the comments below.

Cascading Transformations: Everything, Everywhere, All At Once

To understand what cascading transformations are, let’s trace the sequence of events.

In late 2022, OpenAI released ChatGPT. It was a chat interface, a text box and a response. Impressive, novel, but bounded. It didn’t, on its face, look like the kind of thing that should reshape entire industries. It looked like an ingenious parlor trick.

Then, in 2023, came retrieval-augmented generation and fine-tuned models. Then, in 2024, basic agentic capabilities: AI that could use tools, browse the web, execute multi-step reasoning. Then, in 2025, autonomous agents, capable of planning, adapting, and self-correcting. Then, in 2026, the orchestration of multiple agents working in concert, approximating something that begins to look like an autonomous organization. And threading through all of it, the expansion of context windows, transforming AI from a system that could hold a conversation into an engine that could ingest, synthesize, and reason across vast bodies of information.

We’ve leapt across the five stages of AI evolution, the levels OpenAI has reportedly used to track progress toward general intelligence, in almost a single bound:

Stage 1: Chatbots, AI with conversational language

Stage 2: Reasoners, human-level problem solving

Stage 3: Agents, systems that can take actions

Stage 4: Innovators, AI that can aid in invention

Stage 5: Organizations, AI that can do the work of an organization

Each of these developments, taken individually, looks like a reasonable incremental step. A better model. A new capability. But cascading transformations don’t operate linearly. Each capability multiplies the impact of every capability that came before it.

An agent that can use tools is interesting. An agent that can use tools and reason across a million tokens of context and coordinate with other agents and operate autonomously within defined guardrails, that’s not an incremental improvement. That’s a phase transition. And the evidence is everywhere.

A year ago, Anthropic published research on Claude’s capacity for extended autonomous operation, running for hours without human intervention, navigating complex multi-step workflows. OpenAI announced its Operator agent framework. Google DeepMind’s Gemini models began demonstrating agentic reasoning across modalities. These are tectonic plates shifting in real time.

Meanwhile, the research frontier accelerates independently. Breakthroughs in mixture-of-experts architectures, retrieval-augmented reasoning, reinforcement learning from human feedback, and long-context processing aren’t happening sequentially; they’re happening simultaneously, each one creating downstream butterfly effects that compress the next cycle of innovation further.

When Breathing Room Disappears

In most technological revolutions, there’s breathing room. The printing press took decades to reshape European intellectual life. The internet took roughly fifteen years to move from curiosity to infrastructure. Even the smartphone, which felt fast at the time, gave industries about a decade to adapt from the iPhone’s launch to the mobile-first economy.

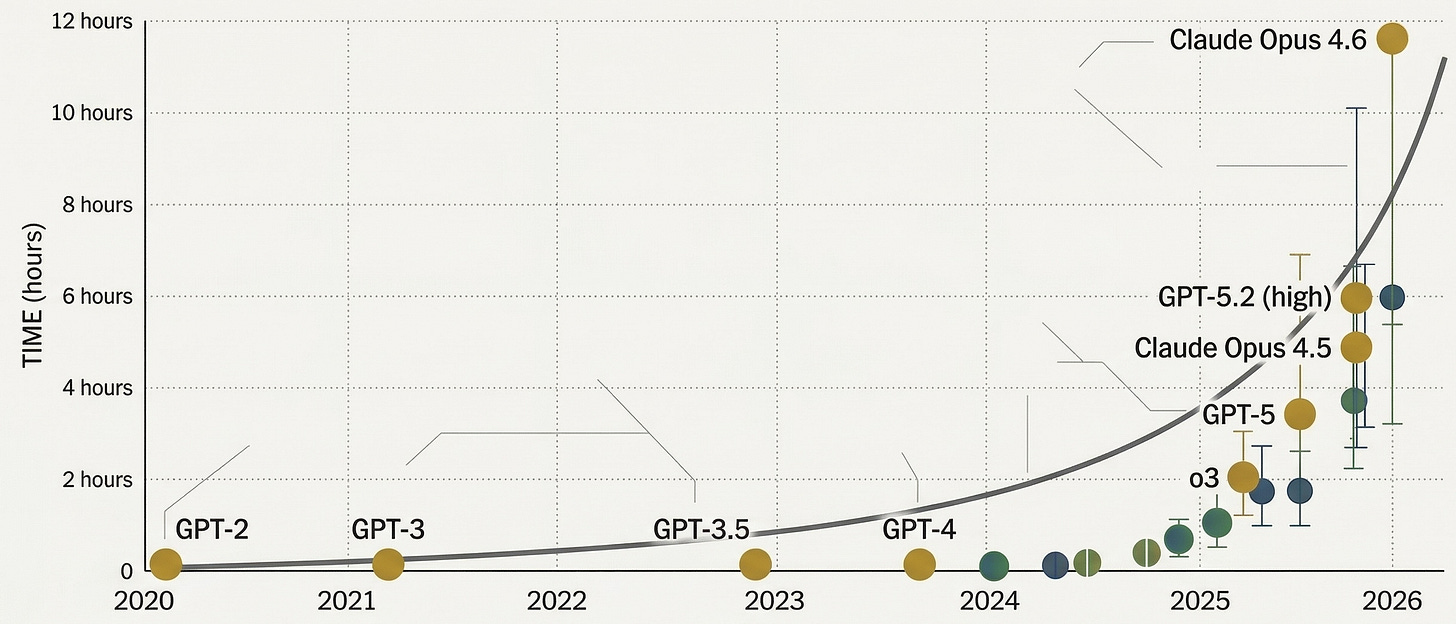

With AI, that buffer has evaporated. The distance between “chatbot” and “autonomous organization” is not a decade. It is, by any reasonable assessment, three to four years. And the rate of cascading transformations is not linear; it’s compounding. The curve bends sharply toward vertical.

This is where the Interstellar analogy comes back in. On Miller’s planet, the crew felt like they had time. The waves looked manageable from a distance. It was only upon return that the devastation of time dilation became apparent. Right now, organizations across every sector are standing on their own version of Miller’s planet. The waves are coming. And every hour of delay costs disproportionately more than the last.

Consider what has already shifted in legal services alone. Three years ago, “AI for law” meant document review automation and basic contract analysis. Today, we’re deploying AI-powered legal information assistants that provide guided access to justice at scale, building autonomous workflows for court systems, and watching the emergence of AI-native legal organizations that operate with a fraction of traditional overhead. The firms and legal aid organizations that moved early aren’t just ahead, they’re operating in a fundamentally different competitive reality than those still deliberating.

The Human Cost of Cascading Transformations

Here is the dimension that receives the least attention and deserves the most: what sustained, accelerating technological disruption does to people.

There is an emerging body of research on what psychologists are beginning to call change fatigue, the cumulative cognitive and emotional toll of continuous adaptation without recovery. A 2024 study from the American Psychological Association found that technology-related workplace stress had increased significantly over two years, driven not by any single disruption but by the relentlessness of sequential disruptions. Gartner’s research tells us that employee willingness to support organizational change has dropped to historic lows.

This is not a productivity problem. It is a human sustainability problem. When the rate of cascading transformations exceeds the rate at which individuals can integrate, process, and find meaning in that change, something breaks. Not the technology, us. Decision fatigue becomes the default operating mode. Strategic paralysis masquerades as prudent deliberation. The most capable professionals in the room aren’t resistant to change, they’re exhausted by it.

And this is the paradox at the heart of our moment. The technology that promises to reduce cognitive burden is, by the very pace of its advancement, creating an unprecedented form of it. We cannot solve this by moving faster. We can only solve it by moving with greater intentionality.

Closing Thoughts

In Interstellar, Cooper makes a choice. He enters the black hole, not because he understands what’s on the other side, but because staying in orbit means watching everything he cares about slip further away. The event horizon demands commitment.

I believe we face an analogous decision. The cascading transformations I’ve described, the multiplicative capabilities, the compressing timeline, the human toll of perpetual disruption, are not going to slow down for strategic planning cycles or regulatory deliberation or professional comfort. The physics of this moment are pulling us forward whether we consent to it or not.

You must be deliberate about your trajectory. Purpose matters more when speed increases. Clarity matters more when complexity compounds. Alignment, between your technology, your values, and the people you serve, matters more when the margin for course correction shrinks with every passing quarter.

These cascading transformations will reshape our industry, our practice, and our careers. Are we building with enough intentionality to shape how that change lands? Or will we be the outside observer, watching decades pass through the window of an orbiting ship, wondering when the world became unrecognizable?

The event horizon doesn’t wait.

Neither should you.
